Disclaimer: Opinions expressed are solely my own and do not reflect the views or opinions of my employer or any other affiliated entities. Any sponsored content featured on this blog is independent and does not imply endorsement by, nor relationship with, my employer or affiliated organisations.

If you've been following this blog, you know the story so far. 2024 gave us AI copilots that summarized alerts but took no action. 2025 proved AI could actually triage, investigate, and reach verdicts at machine speed. The "AI Analyst" became a real product category.

My take: we automated the easy part.

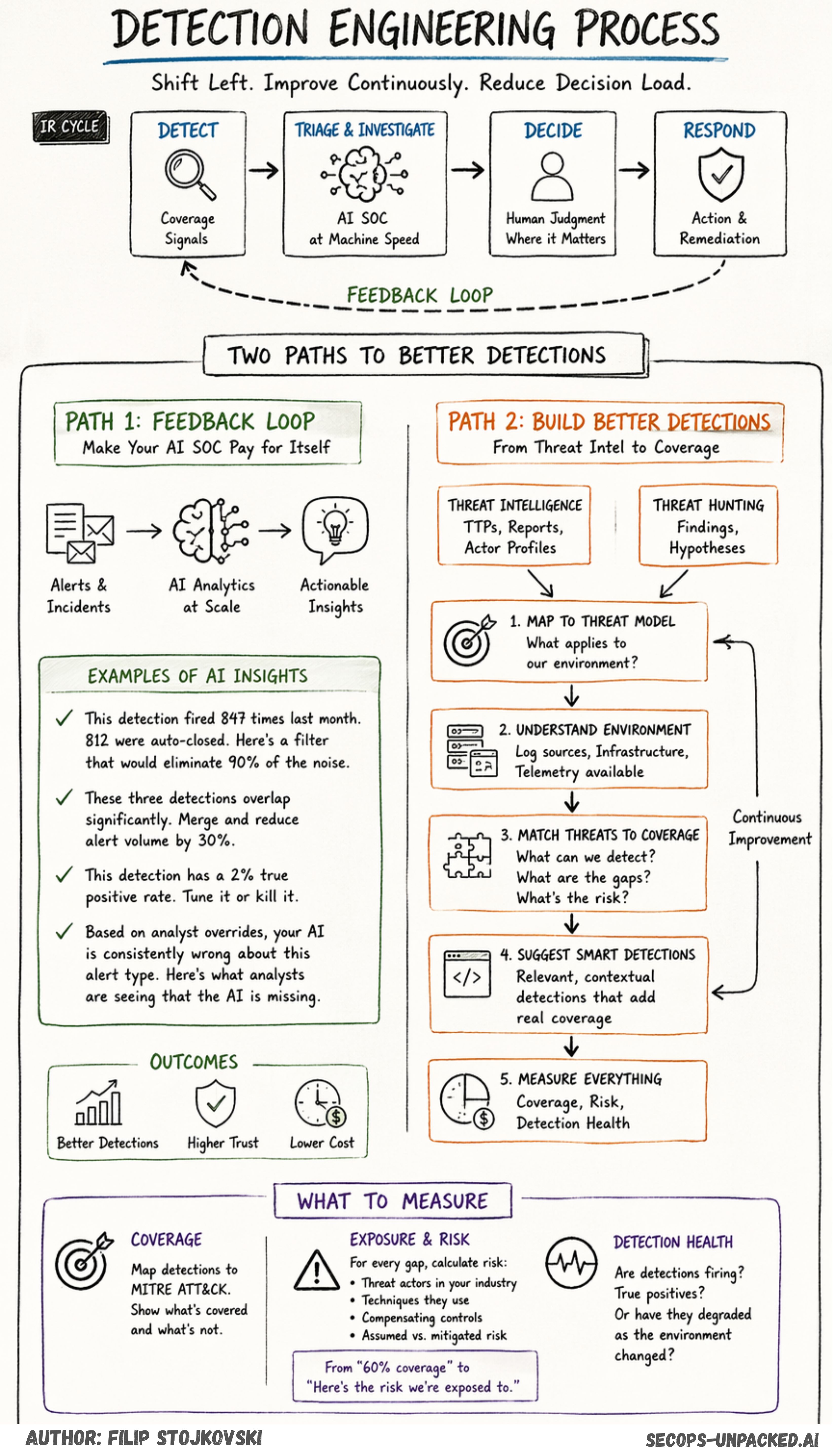

The industry started in the middle of the IR cycle. Triage is analytically complex but operationally simple. No write access required. No change management. No risk of breaking production. It was the perfect first target. Detection engineering sits left of that, tangled in log pipelines and alert volume constraints. Response sits right, blocked by API limitations and organizational risk aversion.

And now we have a new problem.

The Decision Load Tripled

Faster detection and better triage surface more decisions that humans need to make. Before AI, a SOC might process 200 alerts daily and make 50 meaningful decisions. Now AI surfaces 2,000 alerts, auto-closes 1,700, and escalates 300 that require human judgment.

Detection speed improved. Decision velocity did not.

If you read my post on how AI transforms detection engineering, you know I talked about the shift from precision-optimized detection to coverage-optimized detection. The idea is simple: when AI handles triage at scale, you can deploy the detections you always wanted. Broader rules, more coverage, let the AI sort through the noise.

That's true. But it created a side effect nobody planned for.

More coverage means more signals. More signals means more escalations. More escalations means more human decisions. And if those detections are noisy, poorly tuned, or written under the "Fear of Not Doing Enough" (yeah, that post still haunts me), your AI SOC is just processing garbage faster.

Just because AI can investigate and triage alerts faster doesn't mean we should feed it bad detections. We don't want noise detections burning through tokens the same way we didn't want noise detections burning through SIEM licenses. Different cost center, same problem.

Think about it. With traditional SOAR, bad detections cost you analyst hours. With AI SOC, bad detections cost you tokens, compute, and worst of all, they erode trust in the system. Your analysts see the AI confidently closing garbage alerts and start questioning whether it's also confidently closing real threats. That's how you kill adoption.

2026 Will Test the Shift Left

This year will test whether AI can shift left into detection and shift right into response. Not just reorganize the human decision burden but actually reduce it.

I think the shift left is quite important right now. And there are two ways to approach it.

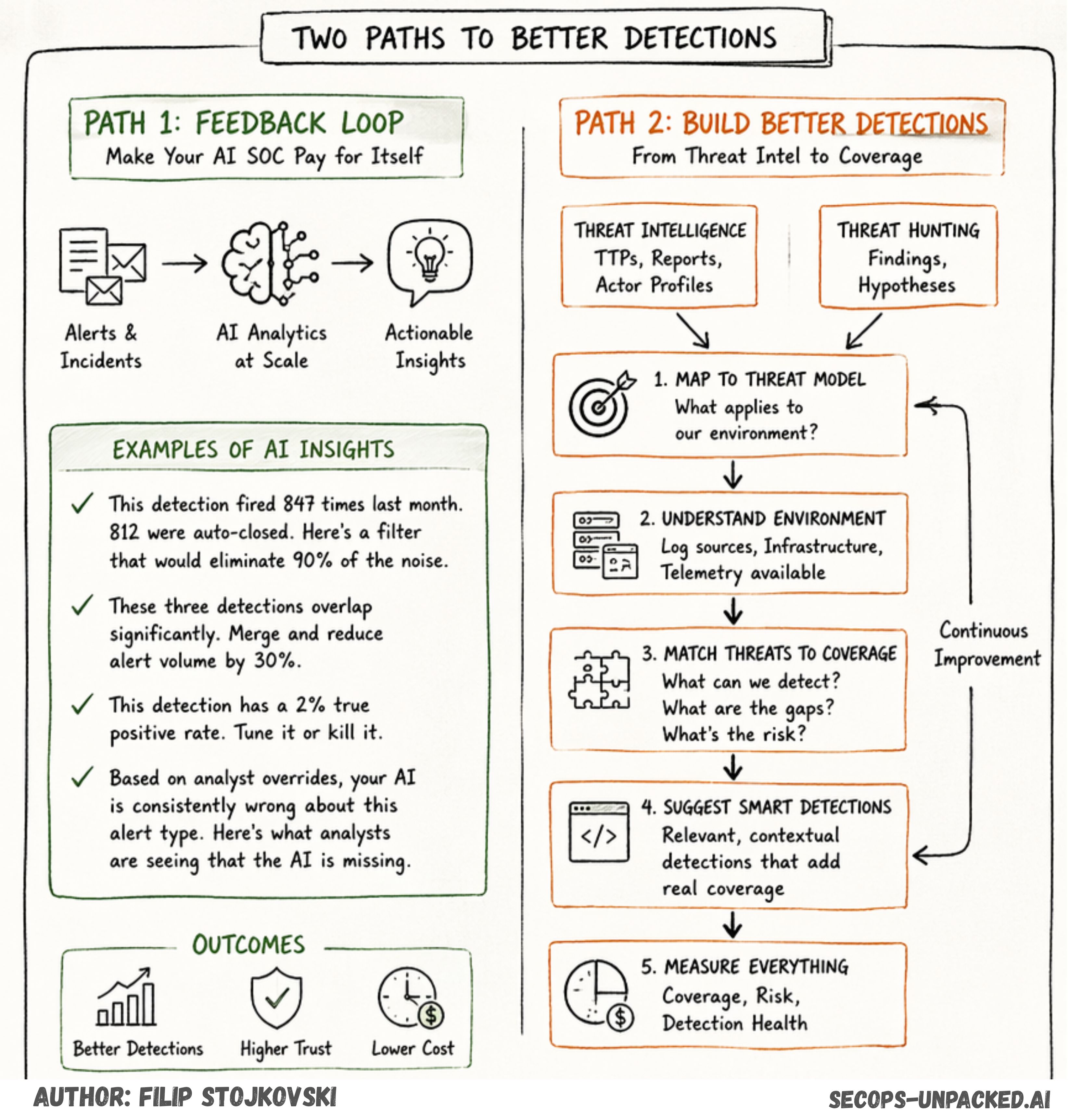

Path 1: The Feedback Loop (Your AI SOC Should Pay for Itself)

If your AI SOC is not giving you suggestions on how to improve your detections, it's designed to charge you more. Full stop.

I've been preaching about feedback loops since the DSAEM days. Detection > SOP > Automation > Emulation > Metrics. The loop is what makes the whole thing work. Without it, you're just driving faster into a wall.

I don't think the AI should give you a one-to-one suggestion for every single alert. That's noise on top of noise. What I see working better is a periodic review. Weekly or monthly. The AI goes over your alerts and incidents, runs analytics across the dataset, and comes back with suggestions.

Things like:

"This detection fired 847 times last month. 812 were auto-closed as benign. Here's a filter that would eliminate 90% of the noise without reducing true positive coverage."

"These three detections overlap significantly. You could merge them into one with better context and reduce your alert volume by 30%."

"This detection has a 2% true positive rate. Either tune it or kill it."

"Based on analyst overrides, your AI is consistently wrong about this alert type. Here's what the analysts are seeing that the AI is missing."

That last one is gold. When analysts override AI decisions, that's training data. Not just for the AI model, but for your detection engineering program. If the AI keeps getting a specific detection wrong, maybe the detection itself is the problem.
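Here is a minimal sketch of how that override signal could be computed. The alert records, detection names, and the 30% threshold are all hypothetical; a real platform would pull verdicts from its own case data.

```python
from collections import Counter

# Hypothetical alert records: (detection name, AI verdict, analyst verdict).
# The analyst verdict is None when nobody reviewed the AI's decision.
alerts = [
    ("impossible_travel", "benign", "malicious"),
    ("impossible_travel", "benign", "malicious"),
    ("impossible_travel", "benign", "benign"),
    ("impossible_travel", "benign", None),
    ("encoded_powershell", "malicious", "malicious"),
    ("encoded_powershell", "benign", "benign"),
    ("encoded_powershell", "benign", None),
]


def override_rates(records):
    """Return {detection: share of reviewed alerts where the analyst disagreed with the AI}."""
    reviewed = Counter()
    overridden = Counter()
    for detection, ai_verdict, analyst_verdict in records:
        if analyst_verdict is None:
            continue  # unreviewed alerts say nothing about AI accuracy
        reviewed[detection] += 1
        if analyst_verdict != ai_verdict:
            overridden[detection] += 1
    return {d: overridden[d] / reviewed[d] for d in reviewed}


rates = override_rates(alerts)

# Assumed threshold: flag a detection when analysts overturn more than 30% of reviewed verdicts.
OVERRIDE_THRESHOLD = 0.30
flagged = sorted(d for d, r in rates.items() if r > OVERRIDE_THRESHOLD)
print(flagged)
```

A flagged detection is a prompt for a conversation, not an automatic verdict: the fix might be in the AI's investigation logic, in the detection itself, or in both.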

The feedback loop isn't just about making the AI smarter. It's about making your detections smarter. The AI sees patterns across thousands of alerts that no human analyst has time to analyze. Use that.
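To make the periodic review concrete, here is a rough sketch of the kind of analytics pass I have in mind. The detection names, counts, and thresholds (5% true positive rate, 100 alerts minimum) are illustrative assumptions, not numbers from any specific product.

```python
from dataclasses import dataclass


@dataclass
class DetectionStats:
    name: str
    fired: int            # alerts raised in the review window
    true_positives: int   # alerts confirmed as real threats

    @property
    def tp_rate(self) -> float:
        return self.true_positives / self.fired if self.fired else 0.0


def review(stats, min_tp_rate=0.05, min_volume=100):
    """Return (name, recommendation) pairs for high-volume, low-yield detections."""
    findings = []
    for s in stats:
        if s.fired >= min_volume and s.tp_rate < min_tp_rate:
            note = f"tune or retire: {s.tp_rate:.1%} true positive rate over {s.fired} alerts"
            findings.append((s.name, note))
    return findings


# Hypothetical monthly window.
window = [
    DetectionStats("dns_tunneling_heuristic", fired=847, true_positives=35),
    DetectionStats("new_admin_account", fired=40, true_positives=12),
    DetectionStats("rare_parent_child_process", fired=500, true_positives=10),
]

findings = review(window)
for name, note in findings:
    print(f"{name}: {note}")
```

The volume floor is a deliberate choice in this sketch: a low-volume detection with few true positives is cheap to keep, so only the noisy ones get surfaced for tuning.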

If your AI SOC vendor doesn't offer this, ask why. Because from where I stand, an AI SOC that doesn't feed back into detection improvement is just an expensive alert processor. And we already had one of those. It was called SOAR.

Path 2: Building Better Detections from Scratch

The feedback loop fixes existing detections. But what about new ones? How do you build better detections from the start?

This is the harder problem. And it's where most teams are still stuck in manual mode.

Let me break down how I think about it.

Where New Detections Come From

In most organizations, new detections come from two places:

Threat Intelligence. You get a feed. IOCs, TTPs, reports about new attack techniques. That intel should feed into your Threat Profile and Threat Modeling. Not every piece of intel is relevant to you. The question is always: does this threat apply to my environment, my industry, my infrastructure?

Threat Hunting. Your hunters go looking for things your detections missed. When they find something, that finding should become a detection. In many cases, threat hunting is also TI-led. The hunter reads a report about a new technique, goes looking for it in the environment, and either confirms or denies its presence.

So the flow looks like this: Threat Intel feeds into both Threat Modeling and Threat Hunting. The outcomes of those processes produce detection suggestions.

Simple enough on paper. In practice? Total mess.

Here's Where It Falls Apart

You get a detection suggestion. Maybe it's a Sigma rule from a community feed. Maybe your hunter wrote it after finding something interesting. The question is: should I implement this?

To answer that, you need to know:

What log sources do I actually have?

What infrastructure am I running?

Do I even have the telemetry to detect this technique?

If I implement this detection, what coverage does it give me?

What's the gap if I don't implement it?

Most teams answer these questions from memory. Or they don't answer them at all. They just implement the detection and hope for the best. That's the "Fear of Not Doing Enough" in action. Write the detection, push it to production, deal with the consequences later.
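The telemetry questions do not have to be answered from memory. A minimal sketch, assuming you keep an inventory of ingested log sources and that each suggested detection declares what it needs (the source names and detection names here are hypothetical):

```python
# Hypothetical inventory of log sources the SIEM actually ingests.
AVAILABLE_LOG_SOURCES = {"windows_security", "sysmon_process_creation", "dns", "proxy"}


def assess_suggestion(name, required_sources, available=AVAILABLE_LOG_SOURCES):
    """Report whether a suggested detection can run on today's telemetry."""
    missing = sorted(set(required_sources) - set(available))
    return {"detection": name, "implementable": not missing, "missing_sources": missing}


# Hypothetical suggestions, each listing the log sources it depends on.
suggestions = {
    "suspicious_lsass_access": ["sysmon_process_access"],
    "encoded_powershell_command": ["sysmon_process_creation"],
}

results = [assess_suggestion(name, required) for name, required in suggestions.items()]
for r in results:
    print(r)
```

Even a check this simple turns "should I implement this?" into either "yes, the data is there" or "not until we onboard these sources".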

This Is Where AI Should Help

An AI-powered or agentic detection engineering platform should handle exactly this workflow:

1. Ingest your threat intelligence. Not just IOCs. TTPs, actor profiles, campaign reports. Map them to your threat model automatically.

2. Understand your environment. Know what log sources you have, what infrastructure you're running, what telemetry is available. This is the foundation. Without it, everything else is guessing.

3. Match threats to coverage. Based on your threat profile and your available telemetry, show me what I can detect and what I can't. Where are the gaps? What's the risk of those gaps?

4. Suggest detections that make sense. Not generic Sigma rules dumped into a folder. Detections that are relevant to my environment, my log sources, my infrastructure. With the context of what coverage they'll add and what risk they'll reduce.

5. Measure everything. Coverage mapping, risk exposure, detection health. The whole picture.
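Steps 2 and 3 can be sketched in a few lines. The technique IDs are real ATT&CK IDs, but the threat profile, the telemetry each technique "needs", and the deployed detections are illustrative assumptions, not an official ATT&CK data source mapping.

```python
# Techniques in a hypothetical threat profile, keyed by MITRE ATT&CK ID.
# The "needs" sets are simplified assumptions about required telemetry.
THREAT_PROFILE = {
    "T1566": {"name": "Phishing", "needs": {"email_gateway"}},
    "T1059": {"name": "Command and Scripting Interpreter", "needs": {"process_creation"}},
    "T1003": {"name": "OS Credential Dumping", "needs": {"process_access"}},
    "T1078": {"name": "Valid Accounts", "needs": {"authentication"}},
}

AVAILABLE_TELEMETRY = {"email_gateway", "process_creation", "authentication"}

# Techniques with at least one live detection (assumed to have working telemetry).
DEPLOYED_DETECTIONS = {"T1566", "T1059"}


def classify(profile, telemetry, deployed):
    """Label each technique as covered, a detectable gap, or a blind spot."""
    status = {}
    for technique_id, info in profile.items():
        if technique_id in deployed:
            status[technique_id] = "covered"
        elif info["needs"] <= telemetry:
            # The data exists, so a detection could be written today.
            status[technique_id] = "detectable gap"
        else:
            # No telemetry: a detection rule would have nothing to match on.
            status[technique_id] = "blind spot"
    return status


status = classify(THREAT_PROFILE, AVAILABLE_TELEMETRY, DEPLOYED_DETECTIONS)
for technique_id, label in status.items():
    print(technique_id, THREAT_PROFILE[technique_id]["name"], "->", label)
```

The split between "detectable gap" and "blind spot" matters: the first is a detection engineering task, the second is a log onboarding task.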

What to Measure

Since we all love our MITRE ATT&CK bingo cards (don't pretend you don't), coverage mapping is the obvious starting point. But it can't stop there.

Coverage. Map your detections against ATT&CK techniques. Show me what's covered and what's not. Yes, it's bingo. But it's useful bingo when you combine it with the next piece.

Exposure and Risk. For every gap in coverage, I need a way to calculate risk. Not some abstract risk score pulled from thin air. Something grounded in: what threat actors target my industry? What techniques do they use? Do I have compensating controls? What's assumed risk versus mitigated risk?

This is how you go from "we have 60% ATT&CK coverage" to "we have 60% coverage, and the 40% we're missing exposes us to these specific attack paths, with this estimated risk, and here's what we'd need to close those gaps."

That's a conversation a CISO can actually use. Not another bingo card.
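A rough sketch of how coverage and gap risk could sit side by side. The scoring formula (number of relevant actors, discounted by compensating controls) is my own illustrative assumption, not an established risk model, and all the numbers are made up.

```python
# Hypothetical technique list. "actors" is the number of threat actors targeting
# my industry that use the technique; "control" is compensating-control strength (0 to 1).
techniques = {
    "T1566": {"covered": True, "actors": 5, "control": 0.0},
    "T1059": {"covered": True, "actors": 4, "control": 0.0},
    "T1078": {"covered": True, "actors": 3, "control": 0.0},
    "T1003": {"covered": False, "actors": 3, "control": 0.5},
    "T1486": {"covered": False, "actors": 4, "control": 0.25},
}


def coverage(items):
    """Share of techniques in the profile with at least one detection."""
    return sum(1 for t in items.values() if t["covered"]) / len(items)


def risk_score(technique):
    """Assumed formula: actor relevance reduced by compensating controls."""
    return technique["actors"] * (1 - technique["control"])


gaps = sorted(
    ((tid, risk_score(t)) for tid, t in techniques.items() if not t["covered"]),
    key=lambda pair: pair[1],
    reverse=True,
)

print(f"coverage: {coverage(techniques):.0%}")
for tid, score in gaps:
    print(f"gap {tid}: risk score {score}")
```

The point is the ordering, not the absolute numbers: the gap list tells you which uncovered technique to work on first.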

Detection Health. Are your existing detections still working? Are they firing? Are they producing true positives? Or have they degraded because the environment changed and nobody updated the rule? This ties back to the feedback loop. A detection you wrote six months ago might be useless today if the infrastructure changed.
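A detection health check can start very simply. This sketch assumes you can look up when each detection last fired and last produced a true positive; the 90-day window, the detection names, and the dates are hypothetical.

```python
from datetime import date


def health(last_fired, last_true_positive, today, stale_after_days=90):
    """Classify a detection by how recently it fired and produced a true positive."""
    if last_fired is None or (today - last_fired).days > stale_after_days:
        return "silent"  # possibly broken by a log source or schema change
    if last_true_positive is None or (today - last_true_positive).days > stale_after_days:
        return "firing without true positives"
    return "healthy"


today = date(2026, 1, 15)  # fixed date so the example is reproducible

# Hypothetical detections: name -> (last fired, last true positive).
detections = {
    "kerberoasting_rc4": (date(2025, 7, 1), date(2025, 6, 20)),
    "rare_parent_child_process": (date(2026, 1, 14), None),
    "new_admin_account": (date(2026, 1, 10), date(2025, 12, 20)),
}

report = {name: health(fired, tp, today) for name, (fired, tp) in detections.items()}
for name, state in report.items():
    print(name, "->", state)
```

"Silent" does not prove a detection is broken, since the technique may simply not have occurred. It is a cue to validate the rule, for example with an emulation test.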

Connecting the Dots

If you zoom out, the picture looks like this:

The AI SOC handles triage and investigation. It's fast, it's scalable, it works. But it only works as well as the detections feeding it. Garbage in, AI-powered garbage out.

The feedback loop takes what the AI learns during triage and feeds it back into detection engineering. It's continuous improvement. Your detections get better over time because you're learning from thousands of alert outcomes, not just the handful an analyst has time to review.

Building better detections from scratch takes the proactive approach. Instead of waiting for bad detections to generate noise, you start from threat intelligence and threat modeling. You map coverage, identify gaps, calculate risk, and build detections that actually matter for your specific environment.

The two paths complement each other. One fixes what you have. The other builds what you need.

And this is exactly the shift left that 2026 needs. We proved AI can triage. We proved it can investigate. Now we need to prove it can help us build better defenses from the start.

Vendor Spotlight: Spectrum Security

This is the first platform I've seen tackling the agentic detection engineering problem. Here's how they approach the TI > Threat Model > Coverage > Risk workflow, their coverage mapping capabilities, and how they connect threat intelligence to actionable detection suggestions based on your actual environment and log sources.

Spectrum Security is tackling the part of the problem most of the market has worked around for years: detection itself.

While much of the industry focused on collecting more data, shipping more content, or accelerating triage, Spectrum starts from a more fundamental question: can you actually detect the threats that matter in your environment right now? That is the question their platform is built to answer.

The company’s thesis is that security teams do not suffer from a lack of telemetry. They suffer from an inability to detect threats through all that telemetry. It’s like searching for a needle in a haystack. More logs do not automatically create coverage. More detections do not automatically reduce exposure. What matters is having the right detections for your environment and ensuring they remain effective as threats evolve and environments change. Static dashboards and ATT&CK heat maps do not provide continuous confidence in a changing environment.

Spectrum is building around that gap.

Their approach connects the pieces security teams have historically managed in separate systems and spreadsheets: measuring and reporting on current coverage, threat intelligence, threat relevance, telemetry availability, detection logic, detection authoring, and ongoing validation. In practice, that means taking threat information and mapping it to the customer’s real environment, understanding what data is actually available, identifying what is detectable versus what is not, turning that into accurate detections, ensuring that they remain valid, and tying all of this to measurable coverage outcomes. The goal is to replace manual-heavy detection engineering that struggles to keep pace with something more continuous, contextual, and provable.

What makes the vision interesting is that Spectrum is not describing detection as a one-time engineering project. It is describing it as a living system. Environments change. Telemetry shifts. Threats evolve. Detection logic drifts. So the real problem is not just creating detections, but continuously knowing whether they still work, where gaps have opened, and what matters to fix next. That is the layer Spectrum…

If the first wave of AI in security helped analysts move faster once alerts already existed, Spectrum is betting the more important shift is upstream: helping teams know what they should detect, what they can detect, and how to close the gap between the two.

Final Thoughts

We spent the last two years optimizing the middle of the IR cycle. Investigation and triage are faster than ever. That's good progress. But faster triage doesn't fix bad detections. It just processes them quicker.

The shift left into detection engineering is where the real value is. Not because it's easy. It's actually the hardest part. But because everything downstream depends on it. Your AI SOC, your response automation, your coverage, your risk posture. All of it starts with whether you're detecting the right things in the first place.

Fix the input. The output takes care of itself.
